Imagine a terrifying scenario. It is the morning of a crucial election in 2026. You scroll through your social feed and see a video of a presidential candidate making a shocking, xenophobic statement. The audio is crisp. Furthermore, the lip movements match the phonemes perfectly. Even the lighting reflects naturally off the candidate’s skin. Consequently, you share it with your family group chat. Within minutes, the video has three million views.

However, that candidate never said those words. In fact, they were on a cross-country flight with no Wi-Fi at the time. You just fell for a high-fidelity deepfake.

This is not science fiction. It is our current reality. From the 2024 New Hampshire robocalls impersonating President Biden to the 2025 Romanian investment scams using AI-cloned ministers, synthetic media is the new front line of information warfare. As of early 2026, AI-generated content has become so abundant that, in some feeds, authentic footage can feel like the minority.

The Mechanics of Deception: Inside the Black Box

To defeat a deepfake, you must first understand its architecture. Fundamentally, these are not “edited” videos in the traditional sense. They are mathematically generated realities.

Generative Adversarial Networks (GANs)

The engine powering most high-end deepfakes is the Generative Adversarial Network (GAN). Imagine two AI agents locked in an eternal game of cat-and-mouse:

The Generator (The Forger): This AI attempts to create a fake image or video frame.

The Discriminator (The Detective): This AI is a classifier trained on large numbers of real photos alongside the Forger’s output. It scores each image on how likely it is to be real.

The process is iterative and relentless. The Forger creates an image. The Detective rejects it, saying, “The shadows on the nose are wrong.” The Forger adjusts and tries again. In practice the feedback is a numeric error signal rather than a sentence, but the effect is the same. This loop repeats millions of times until the Detective can no longer distinguish the forgery from the original. Ultimately, the resulting video is often indistinguishable to the human eye.
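The contest above is usually written as a single minimax objective. In the standard formulation, D(x) is the Detective’s estimated probability that an image x is real, and G(z) is the image the Forger produces from random noise z. The symbols are the conventional ones, not notation from this article:

$$
\min_{G}\,\max_{D}\;\; \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] \;+\; \mathbb{E}_{z \sim p_{z}}\big[\log\big(1 - D(G(z))\big)\big]
$$

The Detective tries to push this value up by scoring real images near 1 and forgeries near 0. The Forger tries to push it down by making D(G(z)) as close to 1 as it can.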

Diffusion Models & Voice Cloning

While GANs dominate face-swaps, Diffusion Models (the tech behind Sora and Midjourney) are now generating entire scenes from scratch. Additionally, audio deepfakes use Neural Voice Cloning. As of 2026, some voice-cloning systems are reported to need as little as a three-second clip of your voice to produce a convincing imitation, including your emotional cadence and even the acoustics of the room.

The Psychology of Belief: Why We Fall for It

Technology is only half the equation. The other half is human biology. Deepfakes hack our brains just as effectively as they hack our screens.

The “Truth Default” Theory

Researchers who study deception argue that humans have a “truth default.” Basically, we evolved to believe what we see and hear. For 200,000 years, if you saw a lion, there was a lion. We have not yet evolved the cognitive hardware to doubt high-definition video. Consequently, when we see a politician accepting a bribe in 4K, our primitive brain accepts it as fact before our logical brain can intervene.

Confirmation Bias & Cognitive Ease

Deepfakes are rarely designed to change your mind. Instead, they are designed to confirm what you already suspect.

If you dislike Candidate A, a deepfake of them saying something offensive feels “true” because it aligns with your internal narrative.

Furthermore, the brain loves “cognitive ease.” If a piece of information is easy to process and fits your worldview, you are far less likely to scrutinize it.

The Liar’s Dividend

Perhaps the most insidious effect of deepfakes is not that we believe lies, but that we stop believing the truth. This is known as the Liar’s Dividend.

When the public becomes aware that anything can be faked, bad actors can dismiss genuine evidence of wrongdoing as “AI-generated slop.”

Example: In 2025, a real recording of a corporate scandal was dismissed by the CEO as an “AI fabrication.” Because the public was already paranoid, the scandal fizzled out. Thus, the mere existence of the technology erodes the foundation of accountability.

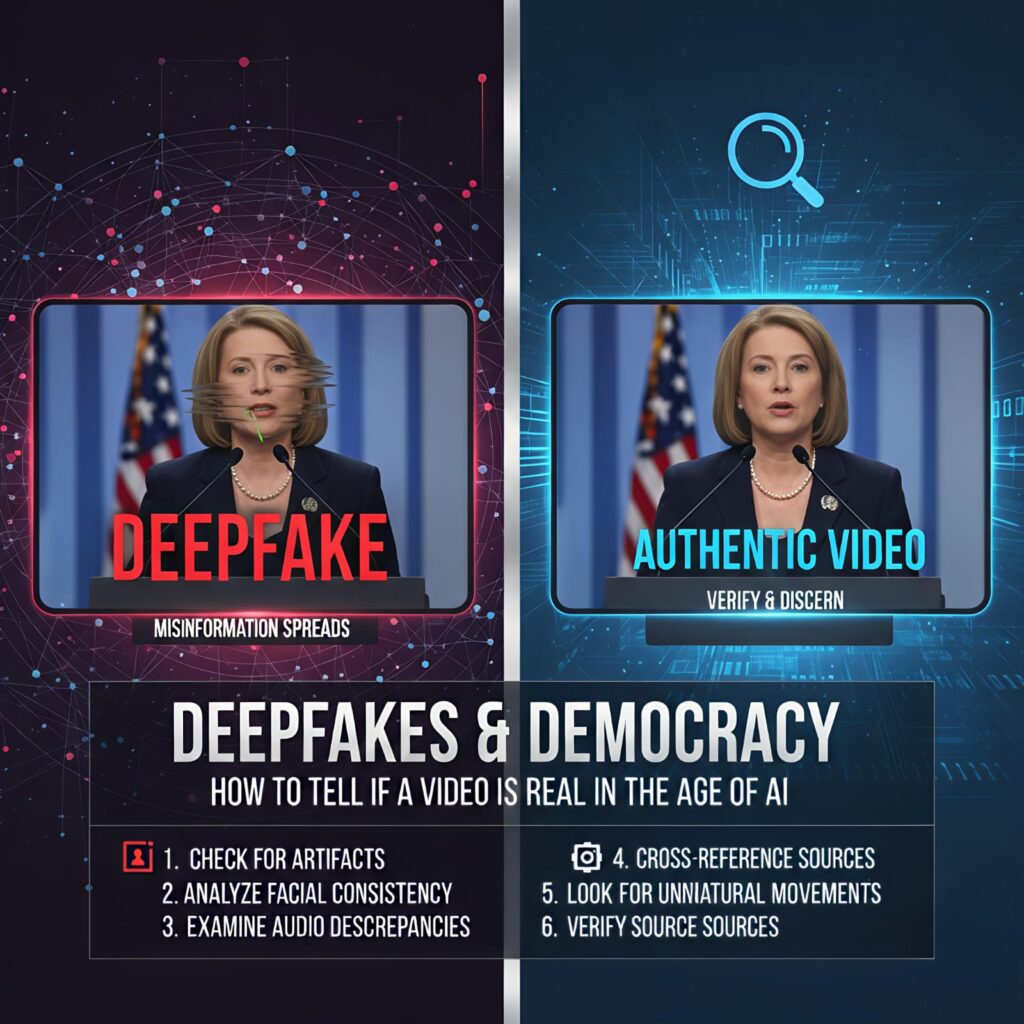

The 3-Second Scan: Manual Detection Techniques

You do not always need a supercomputer to spot a fake. AI models, despite their sophistication, leave behind digital artifacts. You simply need to know where to look. Use this checklist for your daily browsing.

A. The Eyes: Glitches in the Soul

The human eye is incredibly complex. AI struggles to replicate its fluid physics.

Inconsistent Blinking: Humans blink spontaneously. Early deepfakes did not blink at all. In contrast, 2026 models might blink excessively or in a fluttery, robotic pattern.

The Reflection Test: Zoom in on the pupils. In a real video, the reflections of the environment should closely match in both eyes, because both eyes face the same light sources. In generated videos, the highlights in the left eye often differ in shape or position from those in the right.

Gaze Orientation: Does the person’s eye line match the person they are speaking to? Deepfake eyes often stare vaguely into the “middle distance” (the “dead-eye” stare).
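The Reflection Test can be roughed out in code. The sketch below assumes you already have two same-size grayscale crops of the left and right eye (plain lists of 0-255 values) and measures how much their bright highlights overlap. The function names and the brightness threshold of 200 are illustrative assumptions, not part of any forensic tool.

```python
from typing import List

Patch = List[List[int]]  # grayscale eye crop, pixel values 0-255


def highlight_mask(patch: Patch, threshold: int = 200) -> List[List[bool]]:
    """Mark pixels bright enough to count as a specular highlight."""
    return [[pixel >= threshold for pixel in row] for row in patch]


def highlight_iou(left: Patch, right: Patch, threshold: int = 200) -> float:
    """Intersection-over-union of the highlight regions in two eye crops."""
    if len(left) != len(right) or any(len(a) != len(b) for a, b in zip(left, right)):
        raise ValueError("eye crops must have the same dimensions")
    left_mask = highlight_mask(left, threshold)
    right_mask = highlight_mask(right, threshold)
    inter = union = 0
    for left_row, right_row in zip(left_mask, right_mask):
        for l, r in zip(left_row, right_row):
            inter += int(l and r)
            union += int(l or r)
    # No highlight in either eye: nothing disagrees, so treat as consistent.
    return inter / union if union else 1.0


left_eye = [
    [40, 40, 40, 40],
    [40, 250, 250, 40],
    [40, 40, 40, 40],
]
right_eye_real = [
    [40, 45, 40, 40],
    [42, 240, 245, 40],
    [40, 40, 40, 40],
]
right_eye_fake = [
    [40, 40, 40, 40],
    [40, 40, 40, 40],
    [40, 40, 250, 250],
]

print(highlight_iou(left_eye, right_eye_real))  # highlights in the same place
print(highlight_iou(left_eye, right_eye_fake))  # highlights in different places
```

A score near 1 means the two eyes show highlights in the same place; a score near 0 means they disagree. A low score is a hint rather than proof, since head pose, glasses, and compression can also change reflections.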

B. The Mouth: The “Plosive” Problem

Lip-syncing is the hardest part of video generation. Specifically, look at the mouth when the speaker says words with B, M, or P. These are bilabial sounds (B and P are the “plosives,” M is a nasal), and all three require the lips to close fully. Deepfake lips often “smear” or remain slightly open during these sounds. Additionally, look at the teeth. AI often renders them as a solid white bar rather than individual, slightly asymmetrical teeth.

C. Boundary Artifacts and Physics

The Neck and Jawline: This is the “seam” where the digital mask is stitched onto the body. Look for blurring or “jittering” when the person turns their head quickly.

Ear Jewelry: AI struggles with jewelry. If a person is wearing earrings, watch if they stay attached to the ear or if they “float” slightly when the person moves.

Skin Texture: AI skin is often too smooth—like a wax figure. Real skin has pores, fine lines, and tiny imperfections. If the subject looks airbrushed to perfection, be skeptical.

Advanced Forensics: The Heartbeat Check (rPPG)

For high-stakes verification—such as a war-zone video or a presidential address—manual checks are insufficient. This is where we turn to biological signals.

Remote Photoplethysmography (rPPG)

Every time your heart beats, it pumps blood into the vessels of your face. This causes your skin to change color very slightly—becoming redder with oxygenated blood. This change is invisible to the naked eye but can be detected by specialized software.

Real Video: A forensic algorithm can detect a consistent, rhythmic pulse across the forehead and cheeks (e.g., 72 BPM).

Deepfake Video: Historically, deepfakes lacked this blood flow signal entirely.

The 2026 Challenge: Recent research indicates that high-end deepfakes are starting to “inject” a fake pulse signal. However, they often get the spatial distribution wrong. In a real human, blood flows into different parts of the face at slightly different millisecond intervals. In a fake, the whole face might “pulse” simultaneously.
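To make the pulse check concrete, here is a minimal sketch of the core idea. It assumes you have already extracted the average green-channel brightness of a skin region for each video frame, then looks for the heart rate with the strongest periodic signal. The function name `estimate_bpm`, the 42-240 BPM search range, and the synthetic test trace are all assumptions made for illustration. Real forensic pipelines add face tracking, filtering, and motion compensation.

```python
import math
from typing import Sequence


def estimate_bpm(green_means: Sequence[float], fps: float,
                 min_bpm: int = 42, max_bpm: int = 240) -> int:
    """Return the pulse rate (BPM) with the most spectral power in the trace."""
    n = len(green_means)
    if n < 2:
        raise ValueError("need at least two samples")
    mean = sum(green_means) / n
    centered = [v - mean for v in green_means]  # remove the constant skin tone
    best_bpm, best_power = min_bpm, -1.0
    for bpm in range(min_bpm, max_bpm + 1):
        omega = 2 * math.pi * (bpm / 60.0) / fps  # radians per frame
        re = sum(v * math.cos(omega * i) for i, v in enumerate(centered))
        im = sum(v * math.sin(omega * i) for i, v in enumerate(centered))
        power = re * re + im * im  # strength of this candidate rhythm
        if power > best_power:
            best_bpm, best_power = bpm, power
    return best_bpm


# Synthetic 10-second trace at 30 fps: a faint 1.2 Hz (72 BPM) ripple
# on top of a constant brightness of 100.
fps = 30.0
trace = [100.0 + 0.5 * math.sin(2 * math.pi * 1.2 * i / fps) for i in range(300)]
print(estimate_bpm(trace, fps))  # prints 72
```

Running the same estimate separately on the forehead and each cheek is one way to probe the spatial question raised above: in genuine footage the regions should agree on the rate while differing slightly in timing.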

The Digital Nutrition Label: C2PA and Watermarking

The tech industry’s primary defense is Content Provenance. Instead of trying to catch the fake, they are trying to prove the “real.”

The C2PA Standard

The Coalition for Content Provenance and Authenticity (C2PA) has created a “digital nutrition label” for media.

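How it works: When a photo is taken with a C2PA-compliant camera (like new models from Nikon or Leica), the camera attaches a cryptographically signed manifest to the file. The manifest includes a hash of the image data, so any later change to the pixels breaks the match.

The History: Each C2PA-aware editing tool adds its own signed entry to this metadata. If the photo was cropped in Photoshop, it is recorded. If an AI filter was applied, it is flagged.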

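Verified Content: By 2026, major news organizations such as the BBC and the New York Times have backed Content Credentials, and a small “CR” icon appears on a growing share of images. When you click it, you can see the original “capture” data. Because most media online still carries no credentials, a missing history does not prove a fake, but it does mean the video should be verified by other means.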
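The hash-and-sign idea behind that label can be sketched in a few lines. This is a simplified illustration, not the C2PA format itself: real Content Credentials use certificate-based public-key signatures embedded in the file, whereas this sketch substitutes an HMAC with a shared secret so it runs with the Python standard library alone. The function names and the manifest layout are hypothetical.

```python
import hashlib
import hmac
import json


def make_manifest(asset: bytes, actions: list, key: bytes) -> dict:
    """Bind an edit history to the asset's SHA-256 hash and sign the result."""
    claim = {"asset_sha256": hashlib.sha256(asset).hexdigest(), "actions": actions}
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    # Stand-in for a real certificate-based signature (assumption, see lead-in).
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(asset: bytes, manifest: dict, key: bytes) -> bool:
    """Check that the claim is unaltered and still matches the asset bytes."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, manifest["signature"])
    hash_ok = manifest["claim"]["asset_sha256"] == hashlib.sha256(asset).hexdigest()
    return signature_ok and hash_ok


camera_key = b"demo-signing-key"  # hypothetical key for the example
photo = b"raw image bytes from the sensor"
manifest = make_manifest(photo, ["captured", "cropped"], camera_key)

print(verify_manifest(photo, manifest, camera_key))                 # True
print(verify_manifest(photo + b" edited", manifest, camera_key))    # False
```

The second check fails because the altered bytes no longer match the hash recorded in the signed claim, which is the property that lets a viewer trust the displayed history.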

Global Case Studies: When Democracy Was Hacked

To understand the threat, we must examine where it has already breached our defenses.

The 2023 Slovakia Election: The 48-Hour Trap

Just 48 hours before the Slovak election, an audio recording went viral. It featured a liberal candidate discussing how to rig the election by buying votes.

The Reality: It was an AI-generated deepfake.

The Impact: Slovakia’s “media silence” rule restricts campaigning and election coverage in the final two days before a vote, so the recording was hard to debunk widely before polls opened. His party finished second. The fake’s actual effect on the result cannot be measured, but the episode showed that timing is everything.

The 2023 Keir Starmer Deepfake (UK)

During the UK party conference season, an audio clip surfaced of Labour leader Keir Starmer swearing at his staff.

The Reaction: The clip felt “real” because Starmer is often viewed as overly formal; a recording of him “losing it” played into a hidden public curiosity.

The Lesson: Even “low-stakes” deepfakes that just humiliate a person can shift public perception over time.

The 2025 “Ghost” Candidates of Indonesia

In a massive disinformation campaign, over 1,100 deepfake videos were used to create “synthetic candidates” for local offices. These avatars held virtual rallies and answered questions in real-time. As a result, actual humans found it impossible to compete with the 24/7 availability of their AI counterparts.

The 2026 Legal Landscape: Regulation vs. Innovation

As of early 2026, the legal world is finally catching up to the speed of silicon.

The EU AI Act (August 2026 Deadlines)

The EU AI Act is one of the most ambitious AI regulations in the world.

Transparency Obligations: By August 2, 2026, any company deploying an AI system that generates deepfakes must clearly label the content as artificially generated.

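Fines: Breaching these transparency obligations can result in fines of up to €15 million or 3% of global annual turnover. The headline figure of €35 million or 7% is reserved for prohibited AI practices. Consequently, the era of “stealth AI” in Europe is coming to a close.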

The US DEFIANCE Act (2026)

In the United States, the DEFIANCE Act recently passed the Senate. The bill would give victims of non-consensual AI-generated intimate imagery a civil cause of action. Furthermore, several US states (California, Colorado) have enacted their own AI laws, ranging from rules on deceptive election deepfakes to “reasonable care” requirements against algorithmic discrimination.

Actionable Checklist: Your Verification Protocol

Do not let a viral video bypass your logic. Instead, follow this 5-step protocol before clicking “Share.”

Check the “CR” Icon: Does the media have C2PA Content Credentials? If not, investigate further.

The “Reverse Search” Strategy: Take a screenshot of a frame and upload it to Google Lens or TinEye. Often, you will find the original, un-manipulated video from years ago.

Cross-Verify with “Apex” Sources: If a world leader said something truly world-changing, it would be on the AP Wire, Reuters, and the BBC simultaneously. If it is only on a random Twitter account, it is likely fake.

Listen for “Clipping”: Audio deepfakes often have “artifacts”—tiny metallic clicks or sudden shifts in background noise. Listen with headphones.

Identify the Emotional Trigger: If a video makes you feel intense rage or vindication, you are being targeted. Disinformation thrives on high-arousal emotions.

Tools for Your Forensic Toolkit

You can use technology to fight technology. Currently, these tools are the best available for public use:

| Tool | Purpose | Best For |
| --- | --- | --- |
| Reality Defender | Detection Suite | Enterprise-grade scanning of audio and video. |
| Hive Moderation | AI Detection | Checking if a social media post was AI-written or generated. |
| Sensity AI | Deepfake Alerts | Monitoring the web for coordinated deepfake campaigns. |
| Truepic | Provenance | Verifying photos at the point of capture using C2PA. |
| InVID WeVerify | Browser Plugin | A must-have for journalists to debunk viral videos. |

The Future: Deepfakes in the 2026 Midterms and Beyond

The technology is moving toward Real-Time Generative Video.

In the 2026 US midterm elections, we expect to see “Interactive Deepfakes”: bots that reach you by phone or video call, look and sound like your local representative, and have a personalized conversation with you to sway your vote.

However, we are also seeing the rise of “Human-Centric Content.” As AI slop floods the internet, the value of authenticated, human-made content is skyrocketing. As a result, the most successful brands and politicians in 2026 will be those who lean into transparency, raw live-streaming, and verified credentials.

Conclusion: Reclaiming the Truth

Deepfakes are the greatest challenge to our shared reality since the invention of the printing press. Undoubtedly, they will get better. They will get faster. Nevertheless, they are not invincible.

The most powerful tool in the fight against disinformation is not an algorithm; it is a skeptical, educated mind. By understanding the “plosive” glitch, the “dead-eye” stare, and the cryptographic trail of a file, you protect yourself and your community.

Democracy relies on a shared set of facts. When we lose those facts, we lose our ability to self-govern. Therefore, the next time you see a “shocking” video, pause. Look for the “CR” icon. Check the reflections in the eyes.

Stay curious. Stay skeptical. And always verify before you share.